The introduction of the BERT algorithm marked the beginning of a new era in how search strategies are planned and built. As a foremost digital marketing company in Dubai, we have seen search strategies migrate from static keywords to more “human” phrasing. BERT (Bidirectional Encoder Representations from Transformers) is a neural-network technique for natural language processing. With it, Google could for the first time process each word in a sentence in relation to all the other words in that sentence, rather than processing one word after another. As a result, search engines can grasp the subtleties and context of a search far better than before.
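To make "reading in both directions" concrete, here is a minimal Python sketch. It is not BERT and does not reflect how Google implements search; it only shows which neighboring words are available when interpreting one word. The function name `visible_context` and the sample sentence are illustrative assumptions.

```python
def visible_context(tokens, index, bidirectional=True):
    """Return the words available for interpreting tokens[index].

    Hypothetical helper for illustration only: a left-to-right reader sees
    just the words before the target, while a bidirectional reader also
    sees the words after it.
    """
    left = tokens[:index]
    right = tokens[index + 1:] if bidirectional else []
    return left + right


# Sample sentence (an assumption, not taken from any real query log).
tokens = "he sat on the bank of the river".split()
target = tokens.index("bank")

# Left-to-right: "river" is not visible, so "bank" stays ambiguous.
print(visible_context(tokens, target, bidirectional=False))

# Bidirectional: "river" is visible and helps settle the meaning of "bank".
print(visible_context(tokens, target, bidirectional=True))
```

In the bidirectional case the word "river" is part of the context, which is the kind of clue that separates a riverbank from a financial institution.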

Why Google Now Requires Context Post-Update

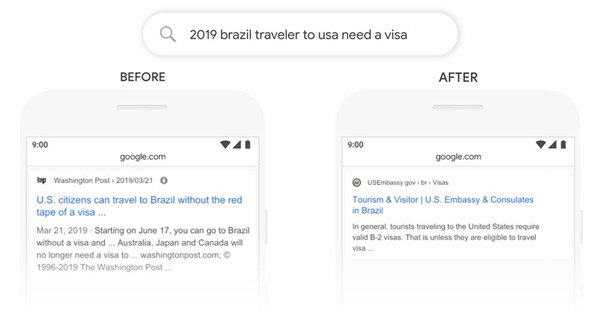

Before the Google BERT update, search engines struggled with queries whose meaning hinged on a preposition or that were loosely phrased. Google's own example was the query “2019 brazil traveler to usa need a visa,” where the word “to” determines who is traveling where. Now, the contextual search algorithm can tell apart queries that look alike and differentiate them based on what the searcher actually means.

The Main Pillars BERT Has Changed

The impact of this update is most visible in how Google handles complexity and natural phrasing. Here are the core areas of shift:

- Search Engines and Natural Speech: With voice search and BERT in use, search engines have become more conversational and user-friendly.

- Enhancing Long Tail Queries: BERT is designed to interpret long, intricate queries, where one word can have several meanings depending on the surrounding words.

- Featured Snippets and BERT: This update has a major influence on which content is deemed worthy of the prestigious “Position Zero.” With BERT, Google has become more accurate at recognizing which page answers a particular question.

| Feature | Before BERT Search | After BERT Search |
| --- | --- | --- |
| Processing | Searches based on literal keywords. | Analyzes the context of the entire sentence. |
| User Intent | Literal matching of search terms. | Deeper understanding of the reasoning behind the search. |
| Nuance | Often disregarded prepositions like “to” or “for.” | Accounts for how prepositions alter meaning. |
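The contrast in the table can be illustrated with a toy Python sketch. It is a simplification under stated assumptions, not a description of Google's systems: the stop-word list, the function names `keyword_signature` and `ordered_signature`, and the two sample queries are all hypothetical.

```python
# Hypothetical stop-word list; real systems differ.
STOP_WORDS = {"to", "for", "from", "a", "the", "in", "of"}


def keyword_signature(query):
    """Literal keyword view: lowercase, drop stop words, ignore word order."""
    return frozenset(w for w in query.lower().split() if w not in STOP_WORDS)


def ordered_signature(query):
    """Order-aware view: keep every word, prepositions included, in sequence."""
    return tuple(query.lower().split())


q1 = "flights from dubai to london"
q2 = "flights from london to dubai"

# The keyword view cannot tell the two trips apart.
print(keyword_signature(q1) == keyword_signature(q2))  # True

# Keeping prepositions and word order separates them.
print(ordered_signature(q1) == ordered_signature(q2))  # False
```

Keeping word order is only a crude stand-in for contextual understanding, but it shows why dropping “from” and “to” loses the direction of travel.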

BERT Content Strategy

At our digital marketing agency in Dubai, we are often asked: “What should I do to optimize for BERT?” The answer is simpler than it seems: write for people rather than for algorithms. There are no technical “hacks” for this update. It is about understanding user intent and answering queries as clearly as possible.

- Be Direct: Get straight to the point so Google can identify your content for featured snippets. Modern algorithms prefer concise, direct answers to user questions.

- Write Naturally: Use the conversational style your customers use when they talk to each other and ask questions. This helps with voice search and BERT optimization.

- Focus on Topic Authority: Cover a topic thoroughly so Google's semantic search can identify your site as an entity with expertise on that topic.

The Verdict: A Better Search Experience

In the end, the impact of BERT on rankings has been beneficial for sites that value quality and clarity. A specialized search engine optimization company in Dubai can help you develop a content strategy that keeps pace with the latest algorithm updates.

The main objective has shifted from trying to outsmart algorithms to being the best, most authoritative answer for your users. By focusing on user intent, your business can secure a long-term competitive advantage in the UAE’s digital landscape.